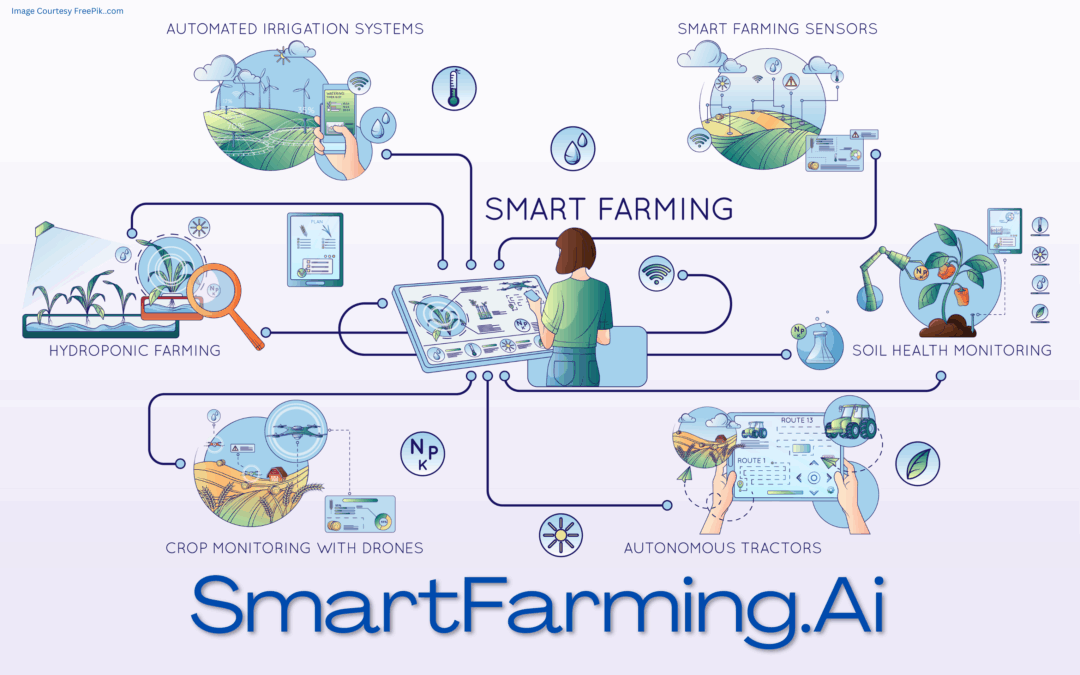

🌾 SmartFarming.Ai – Precision Agriculture for a Smarter Planet

The Following Content is Created by SUM, powered by ChatGPT, and is an Example of the Domain Name’s Potential Usage.

The Domain Name Featured is For Sale. Contact admin@Discovr.Ai to Make an Offer.

🌱 SmartFarming.Ai: Revolutionizing Global Agritech with AI Innovation

📖 Story

Poor data hygiene silently erodes every model, dashboard, and decision it’s connected to. DataHygiene.Ai exists to stop that erosion at the root. Whether you’re training large-scale models, running real-time analytics, or simply integrating APIs across departments, DataHygiene.Ai ensures your systems run on clarity, not corruption. It helps eliminate duplicates, catch logic inconsistencies, and remove the semantic sludge that creeps in as data pipelines scale. With a strong focus on data integrity, data validation, and AI quality control, this platform supports everything from automated data cleansing to pre-deployment audits. In a world obsessed with AI outputs, DataHygiene.Ai reminds us that what goes in matters just as much.

📦 Use Case Scenarios

🧼 Automated Data Cleaning Pipelines

Continuously scan and sanitize datasets before they enter AI or analytics workflows.

💡 Suggested Use: Deploy in real-time ingestion systems to reduce noise, keep incoming records consistently structured, and protect downstream decisions.
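
As a rough illustration of what such a cleaning step might do at ingestion time, here is a minimal Python sketch. It is not DataHygiene.Ai's actual implementation; the function name `clean_records`, the `key_fields` parameter, and the sample rows are all hypothetical. It assumes records arrive as dictionaries and that a duplicate means "same key fields, ignoring case and surrounding whitespace".

```python
from typing import Iterable, Iterator


def clean_records(records: Iterable[dict], key_fields: tuple[str, ...]) -> Iterator[dict]:
    """Yield normalized records, skipping incomplete rows and duplicates.

    Hypothetical helper for illustration only.
    """
    seen = set()
    for record in records:
        # Normalize: strip surrounding whitespace from every string value.
        normalized = {
            k: v.strip() if isinstance(v, str) else v for k, v in record.items()
        }
        # Drop rows where any key field is missing or empty.
        if any(normalized.get(f) in (None, "") for f in key_fields):
            continue
        # Treat rows as duplicates when their key fields match case-insensitively.
        key = tuple(str(normalized[f]).lower() for f in key_fields)
        if key in seen:
            continue
        seen.add(key)
        yield normalized


rows = [
    {"email": " A@x.com ", "name": "Ann"},
    {"email": "a@x.com", "name": "Ann B"},  # duplicate of the first row
    {"email": "", "name": "Bob"},           # missing key field
]
cleaned = list(clean_records(rows, ("email",)))
print(cleaned)
```

Because the function is a generator, it can sit in front of a streaming source and pass clean rows downstream one at a time. The trade-off is that the `seen` set grows with the number of distinct keys, so a long-running pipeline would need a bounded or windowed variant.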

🔍 Pre-Model Validation Layers

Verify data quality and flag inconsistencies before training or fine-tuning models.

💡 Suggested Use: Integrate into model ops stacks to enforce data integrity and improve AI quality control.

📊 BI Dashboard Accuracy Enhancers

Improve the accuracy and trustworthiness of business intelligence tools.

💡 Suggested Use: Layer data validation and hygiene logic into dashboard engines to reduce misreadings and reporting errors.

🛠️ Regulatory & Compliance Audit Support

Ensure datasets meet governance standards through automated policy checks.

💡 Suggested Use: Use for GDPR, HIPAA, and ISO-aligned workflows requiring continuous data cleaning and retention controls.

🛠️ System Foundations

🧩 Pluggable API-Led Architecture

Built to work inside existing ETL, MLOps, or analytics stacks with minimal friction.

🌀 Real-Time Signal Purifiers

Eliminates redundant, irrelevant, or misleading entries across pipelines to keep noise low.

🔒 Integrity-First Schema Governance

Applies strict schema controls and column-level validations to preserve data integrity.

⚙️ ML-Ready Cleansing Engines

Optimized for preparing structured data for model ingestion or synthetic generation.
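
To make the schema-governance idea above more concrete, the following Python sketch shows one way column-level validation could work. It is an assumption-laden example, not the platform's API: `ColumnRule`, `validate_row`, and the sample schema are hypothetical names invented here, and "strict" is taken to mean that unexpected columns are reported as errors.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass(frozen=True)
class ColumnRule:
    """Hypothetical rule for one column: name, type, and an optional value check."""
    name: str
    dtype: type
    required: bool = True
    check: Optional[Callable[[Any], bool]] = None


def validate_row(row: dict, schema: list[ColumnRule]) -> list[str]:
    """Return a list of human-readable violations (empty if the row is valid)."""
    errors = []
    allowed = {rule.name for rule in schema}
    # Strict mode (assumed): any column not declared in the schema is an error.
    for extra in sorted(set(row) - allowed):
        errors.append(f"unexpected column: {extra}")
    for rule in schema:
        value = row.get(rule.name)
        if value is None:
            if rule.required:
                errors.append(f"missing required column: {rule.name}")
            continue
        if not isinstance(value, rule.dtype):
            errors.append(
                f"{rule.name}: expected {rule.dtype.__name__}, "
                f"got {type(value).__name__}"
            )
            continue
        # Run the value-level check only once the type is known to be right.
        if rule.check is not None and not rule.check(value):
            errors.append(f"{rule.name}: failed value check")
    return errors


schema = [
    ColumnRule("id", int),
    ColumnRule("age", int, check=lambda v: 0 <= v <= 130),
    ColumnRule("note", str, required=False),
]
print(validate_row({"id": 1, "age": 30}, schema))
print(validate_row({"id": "1", "age": 200, "extra": True}, schema))
```

Returning a list of violations rather than raising on the first one lets a pipeline log every problem in a row at once, which tends to be more useful for audits. In production, an established validation library would likely be a better fit than a hand-rolled checker like this.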

✅ Pros

✔️ Extremely clear brand purpose centered on trust and accuracy

✔️ Perfect fit for enterprise data teams, AI developers, and compliance-heavy industries

✔️ Strong relevance to AI quality control, data validation, and noise reduction

✔️ Supports both real-time systems and pre-deployment audit frameworks

✔️ Clean, intuitive name that’s both literal and expandable in scope

🔮 Future Outlook

The future of AI isn’t just generative—it’s dependable. As companies demand cleaner input streams to match their growing reliance on automated systems, DataHygiene.Ai is positioned to become the silent backbone of trustworthy intelligence. From model prep to audit readiness, platforms focused on data cleaning, data integrity, and structured data will define the next layer of infrastructure behind resilient, explainable, and legally defensible AI systems.

⭐ Final Verdict

★★★★★ (5.0) – A surgically clear brand with scalable potential across AI, enterprise data ops, and compliance tech.

🔖 Hashtags

#datacleaning #dataintegrity #AIqualitycontrol #datavalidation #noisereduction #enterpriseintelligence #structureddata #DiscovrAi


Logo ™ and © property of respective owners
